What TurboQuant Actually Means for AI Memory Stocks

On March 25, 2026, Google Research published a paper on a new compression algorithm called TurboQuant. Within hours, memory stocks were tanking. Cloudflare (NET) CEO Matthew Prince called it “Google’s DeepSeek moment” – and Wall Street took that as a sell signal.

Micron (MU), SanDisk (SNDK), Western Digital (WDC), and Seagate (STX) had been among the hottest stocks in the entire market, riding the AI memory bottleneck thesis. Each was up hundreds of percent as investors collectively woke up to a simple truth: you cannot build AI without memory, and there wasn’t nearly enough of it to go around.

Then came TurboQuant, and just like that, the hottest group in the market found itself in a selling frenzy.

Google’s TurboQuant targets something called the Key-Value (KV) cache – the working memory AI models use to store contextual information so they don’t have to recompute it with every new token they generate. As models process longer inputs, the KV cache grows in step with the input length, consuming GPU memory at an alarming rate. TurboQuant compresses that cache from 16 bits per value down to just 3 bits – a roughly 6x reduction in memory footprint (the raw 16-to-3 bit ratio alone is about 5.3x) – with, per Google’s benchmarks, zero loss in model accuracy. No retraining required. No fine-tuning. It’s genuinely impressive; a real breakthrough.
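To put rough numbers on that, here is a minimal sketch of the standard KV cache sizing arithmetic: two tensors (keys and values) per layer, one entry per token. The model shape below (32 layers, 8 KV heads, head dimension 128) is a hypothetical example of ours, not a figure from Google’s paper, and the sketch ignores any extra metadata a real quantization scheme might store alongside the compressed values.

```python
def kv_cache_bytes(num_layers, num_kv_heads, head_dim, context_tokens,
                   bits_per_value, batch_size=1):
    """Approximate KV cache size in bytes.

    Keys and values are each stored per layer, per KV head, per token,
    hence the leading factor of 2.
    """
    values = 2 * num_layers * num_kv_heads * head_dim * context_tokens * batch_size
    return values * bits_per_value / 8


# Hypothetical model shape, chosen only for illustration.
LAYERS, KV_HEADS, HEAD_DIM = 32, 8, 128
CONTEXT = 100_000

cache_16bit = kv_cache_bytes(LAYERS, KV_HEADS, HEAD_DIM, CONTEXT, bits_per_value=16)
cache_3bit = kv_cache_bytes(LAYERS, KV_HEADS, HEAD_DIM, CONTEXT, bits_per_value=3)

print(f"16-bit cache: {cache_16bit / 2**30:.2f} GiB")
print(f" 3-bit cache: {cache_3bit / 2**30:.2f} GiB")
print(f"raw bit ratio: {cache_16bit / cache_3bit:.2f}x")
```

Doubling `CONTEXT` doubles both results, which is the linear growth with input length that makes long contexts so memory-hungry.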

So why aren’t we panicking? Because there is a very old and very reliable pattern in technology investing. An efficiency breakthrough gets announced. The market panics. Investors dump the stocks that allegedly benefit from inefficiency. And then, six to 12 months later, everyone quietly realizes they sold exactly the wrong thing at exactly the wrong time.

We think that’s exactly what’s happening now – and we’ll show you why.

The Bear Case for AI Memory Stocks After TurboQuant

Before we dismantle it, let’s give the bear case its due. The bears aren’t unintelligent – they’re just drawing the wrong conclusion from a real observation.

AI memory demand has been projected to grow explosively because of the KV cache. As context windows expand from 100,000 to 1 million-plus tokens, the KV cache grows proportionally, creating insatiable demand for high-bandwidth memory (HBM). That demand thesis is a huge part of why stocks like MU and SNDK ran so hard.

TurboQuant, if widely adopted, compresses the KV cache by 6x. So the bearish argument goes that if the KV cache is 6x smaller, we’ll need only one-sixth as much memory.

‘Memory demand – and memory stocks – will crater. Sell everything.’

Wells Fargo (WFC) analyst Andrew Rocha articulated this cleanly: if TurboQuant is adopted broadly, it quickly raises the question of how much memory capacity the industry actually needs.

That’s a fair question. It’s just that the answer isn’t what the bears think.

Why TurboQuant Will Increase AI Memory Demand, Not Reduce It

In 1865, British economist William Stanley Jevons noticed something counterintuitive about coal consumption in England.

You might expect that as steam engines became more efficient – requiring less coal to do the same work – coal consumption would fall. Instead, as Jevons observed, it exploded. More efficient engines made coal-powered applications cheaper to run, which unlocked a massive wave of new use cases that more than offset the efficiency gains.

Jevons called it a paradox. And it’s why we’re confident that Google TurboQuant will not kill memory demand.

Here’s how we see the Jevons paradox playing out for AI memory specifically:

Channel 1: Context Window Expansion

Right now, long-context AI inference is brutally expensive because KV cache memory scales linearly with context length. That cost constraint has been a real ceiling on how ambitiously developers deploy long-context models. At a 6x compression ratio, TurboQuant effectively makes the same GPU that currently supports a 100K-token context window capable of supporting a 600K-token context window – with no additional hardware.
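The context-window arithmetic can be sketched in a few lines, assuming a fixed memory budget reserved for the KV cache. The per-token figure reuses a hypothetical model shape of ours (32 layers, 8 KV heads, head dimension 128, 16-bit values); none of these numbers come from Google’s paper. The 600K figure follows from a 6x ratio, while the raw 16-to-3 bit ratio lands a little lower.

```python
def tokens_that_fit(budget_bytes, bytes_per_token_16bit, compression_ratio):
    """Number of tokens whose KV cache fits in budget_bytes after compression.

    Compressing by `compression_ratio` is equivalent to multiplying the
    budget by that ratio while keeping the uncompressed per-token cost.
    """
    return int(budget_bytes * compression_ratio // bytes_per_token_16bit)


# Hypothetical per-token cost: 2 (keys + values) * 32 layers * 8 KV heads
# * 128 head dim * 2 bytes per 16-bit value.
BYTES_PER_TOKEN_16BIT = 2 * 32 * 8 * 128 * 2

# Budget sized so that exactly 100K tokens fit without compression.
BUDGET_BYTES = 100_000 * BYTES_PER_TOKEN_16BIT

for label, ratio in [("uncompressed", 1), ("6x headline", 6), ("raw 16/3 bits", 16 / 3)]:
    tokens = tokens_that_fit(BUDGET_BYTES, BYTES_PER_TOKEN_16BIT, ratio)
    print(f"{label:>14}: {tokens:,} tokens")
```

The budget is deliberately held constant here: the point is that the same hardware stretches further, not that less hardware gets bought.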

The moment that reaches widespread deployment, a massive wave of applications that weren’t economically viable suddenly become viable: deep document analysis across entire legal libraries, persistent AI agents with genuinely long memory, complex multi-step reasoning chains. All of those new applications consume more total compute and memory than the constrained baseline.

The efficiency gain doesn’t reduce the memory market – it expands it into territory that was previously off-limits.

Channel 2: New Application Categories

Cheaper inference means more inference. Every major reduction in inference cost has historically triggered a more-than-proportional expansion in what developers actually build. When OpenAI slashed GPT-3.5 Turbo API pricing through 2023, developers who had been prototyping suddenly deployed at scale – and entirely new application categories emerged almost overnight. AI writing tools, coding assistants, and customer service bots went from niche experiments to mainstream products not because the technology improved, but because the economics finally made sense. TurboQuant is the same forcing function for a new tier of applications.

The ceiling for AI capabilities has been cost. Lower that cost, and you unlock demand tiers that simply didn’t exist before.

Channel 3: Edge and Mobile AI

TurboQuant enables meaningful LLM inference on devices with far less memory than today’s data center GPUs. One benchmark showed that a 3-bit KV cache could make 32K-plus token contexts feasible on mobile phones. That means the addressable market for memory in an on-device AI world is potentially larger than the data center market.

Efficiency enabling edge deployment is a demand expansion story, not a demand destruction story. In fact, the market was handed a near-identical lesson just months ago – and most investors have already forgotten it.

The DeepSeek Playbook: What the Last AI Efficiency Panic Got Wrong

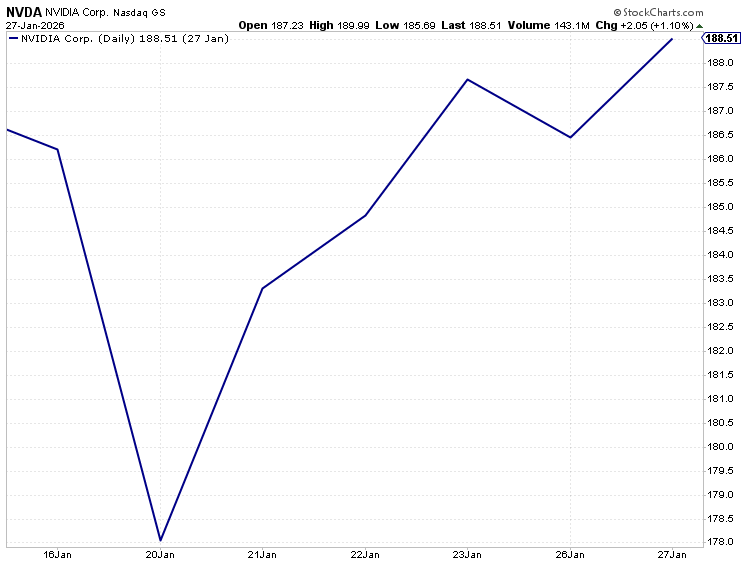

In early 2025, DeepSeek published a paper showing you could train frontier-quality AI models at a fraction of the cost.

The market’s immediate reaction? Sell Nvidia (NVDA). Sell AI infrastructure. Panic.

What actually happened: hyperscalers immediately used the efficiency gains to run more inference at greater scale. Capex guidance went up, not down. The dip became one of the most obvious buying opportunities of the year, and AI infrastructure stocks subsequently ripped.

TurboQuant is the same dynamic applied to memory. Right now, the market is selling memory stocks because AI will need less memory per query. But the real question isn’t “how much memory per query?” It’s, “how many queries?”

And as cheaper inference unlocks an ocean of new use cases, the answer is: far more.
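The “how many queries?” framing reduces to one line of arithmetic: total KV cache demand is memory per query times query volume. The sketch below is our own simplification, with illustrative growth multiples rather than forecasts, and it covers only the KV cache, not model weights or storage. It shows the condition the Jevons argument depends on: demand ends up higher only if usage grows by more than the compression ratio.

```python
def relative_memory_demand(compression_ratio, query_growth):
    """Total KV cache demand relative to the pre-compression baseline.

    Memory per query shrinks by `compression_ratio`; query volume (or
    context length per query) grows by `query_growth`.
    """
    return query_growth / compression_ratio


COMPRESSION = 6  # the article's headline ratio

# Illustrative growth multiples, not predictions.
for growth in (2, 6, 12):
    demand = relative_memory_demand(COMPRESSION, growth)
    if demand > 1:
        verdict = "higher than before"
    elif demand < 1:
        verdict = "lower than before"
    else:
        verdict = "unchanged"
    print(f"usage x{growth:>2}: demand is {demand:.2f}x baseline ({verdict})")
```

The break-even point is usage growth equal to the compression ratio; the bull case is a bet that growth lands well past it.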

Now, there’s one distinction worth flagging. Unlike DeepSeek, which was a deployed model developers could download and run the day the paper dropped, TurboQuant is still pre-production – real-world integration across hyperscaler infrastructure is likely 12 to 24 months out.

But the direction looks the same. And the valuation setup for memory stocks right now makes the entry point arguably even more compelling.

The AI Memory Stock Selloff Makes No Analytical Sense

Set Jevons aside entirely. Even accepting the bears’ core premise – that TurboQuant will reduce KV cache memory demand – the selloff in SNDK and STX is still nonsensical.

TurboQuant compresses the KV cache, which lives in GPU HBM and DRAM. That’s the domain of Micron and SK Hynix.

SanDisk is primarily a NAND flash company. Seagate is an HDD company. Neither has meaningful HBM exposure.

The fact that SNDK and STX sold off as hard as MU tells you everything you need to know: this is panic-driven, not analytical.

The market is pattern-matching on “AI efficiency breakthrough = sell memory” without distinguishing between what type of memory is actually affected.

That’s the kind of indiscriminate selling that creates generational entry points.

The Bottom Line

AI memory stocks have been punished by two things at once: macro headwinds, chiefly the now-fading geopolitical uncertainty from the Iran conflict, and a panic over a compression algorithm that misreads a genuine efficiency improvement as a demand destruction event.

SemiAnalysis memory analyst Ray Wang put it plainly: it will be “hard to avoid higher usage of memory” as a result of improving model performance. And Quilter Cheviot’s technology head Ben Barringer called TurboQuant “evolutionary, not revolutionary – it does not alter the industry’s long-term demand picture.”

We agree.

The Jevons paradox is about to take its revenge on everyone who sold AI memory stocks because Google figured out how to make AI more efficient. History is littered with investors who made exactly this mistake – who sold the shovels because gold became easier to find, then watched the gold rush accelerate instead.

Don’t sell the shovels. This gold rush is just getting started.

What Smart Money Does While Everyone Else Panics

The memory stocks getting sold off today are the shovels of this gold rush – and we’ve argued they’re being thrown away at exactly the wrong moment. But if the Jevons rebound plays out the way we expect, the next leg of this AI bull market won’t just reward the infrastructure. It’ll reward the platforms built on top of it…

Which brings us to the company at the center of it all.

Every efficiency breakthrough we’ve discussed – TurboQuant, DeepSeek, cheaper inference unlocking new application tiers – ultimately accelerates demand for one thing: AI platforms capable of deploying at scale. And no company is better positioned to capture that demand than OpenAI.

Most investors are waiting for the IPO. That’s the wrong move. The biggest gains in generational companies don’t go to investors who buy on listing day – they go to investors who found a way in before the crowd arrived.

We have identified a way to stake a claim in OpenAI right now, before any IPO is announced, for under $10.

When OpenAI goes public at an expected $1 trillion valuation and gets added to the S&P 500, the wave of institutional buying alone will be historic. The window to get in ahead of that moment is open right now – but it won’t stay open forever.
