The Nvidia Acquisition Rumor Shouldn’t Be Ignored

Something strange just happened in the market. And it had nothing to do with geopolitics, inflation, or macro noise.

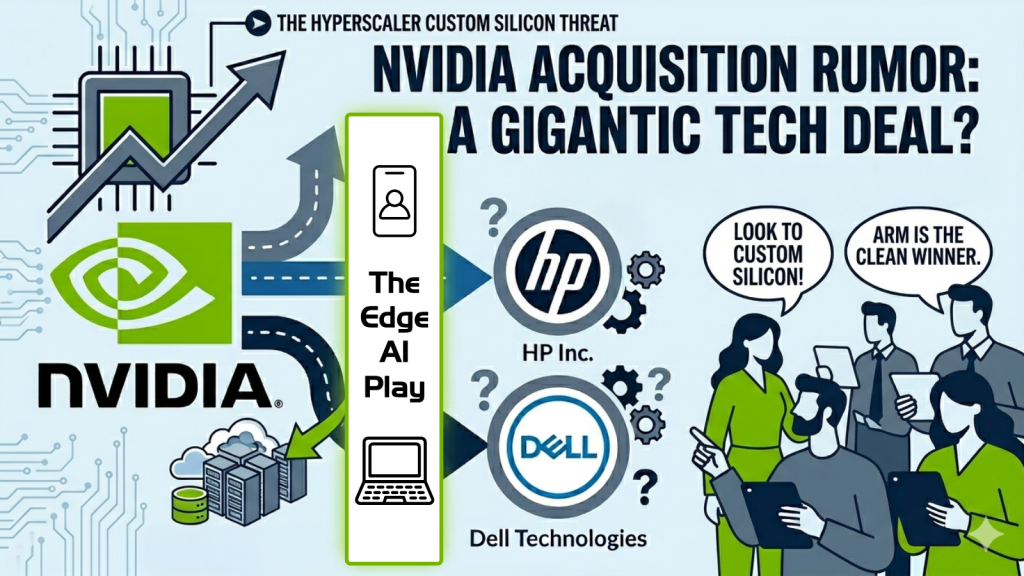

Instead, acquisition rumors surrounding tech titan Nvidia (NVDA) made waves this week.

A new report from tech news website SemiAccurate claimed that Nvidia was in negotiations to acquire “a large company” that would “reshape the PC landscape.” That led shares of Dell Technologies (DELL) and HP Inc. (HPQ) – two of the biggest personal computer companies – to suddenly surge. Dell jumped as much as 7.6% early this week. HP popped as much as 6.3%.

But it didn’t take long for Nvidia to quash the rumor. A spokesperson quickly told Tom’s Hardware: “The media report is false; Nvidia is not engaged in discussions to acquire any PC maker.”

One niche tech blog, one unnamed source, and a couple of stocks jumping because Wall Street will trade anything.

Nothing to see here… right?

What Nvidia Actually Is (And Why the Rumor Sounds Wrong)

To understand why the rumor isn’t as absurd as it sounds, it helps to understand what Nvidia actually is – and where it may be quietly trying to go.

From Gaming GPUs to AI Infrastructure Dominance

Nvidia makes graphics processing units (GPUs) – specialized chips originally designed to render video game graphics. It turns out that the same mathematical properties that make GPUs great at rendering also make them proficient at training artificial intelligence models. So when the AI boom hit, Nvidia found itself holding the keys to the kingdom. It is now the most valuable company in the world, with a $4.87 trillion market cap.

Its chips – the H100 and the Blackwell series – are the primary engine powering virtually every major AI system on the planet, from ChatGPT to Google Gemini to Meta’s (META) Llama. Every big tech company is spending hundreds of billions of dollars building data centers stuffed floor-to-ceiling with Nvidia hardware.

That means that Nvidia is, in short, a data center company. It has absolutely nothing to do with selling laptops and desktop computers to regular people, which is what makes this acquisition rumor seem so bizarre.

Why Nvidia Might Target PC Makers Anyway

HP and Dell are two of the world’s largest PC manufacturers. HP has roughly 19% of the global PC market. Dell has about 17%. They’re enormous, well-known businesses, but ones with notoriously thin profit margins, complex global supply chains, and deeply commoditized products.

Nvidia’s gross margins hover around 70% to 75%. Dell’s are closer to 22%. HP’s are similar.

Acquiring either would be like a Michelin-star restaurant buying a fast-food chain. The economics, margins, and operating models don’t line up.
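To put rough numbers on that mismatch, here is a minimal sketch of how a revenue-weighted gross margin would blend if a high-margin chip business absorbed a low-margin PC business. The margins come from the figures above (the midpoint of the 70% to 75% range and the roughly 22% cited for Dell). The revenue figures are purely hypothetical placeholders chosen for round arithmetic, not reported results, and the `blended_gross_margin` helper is an illustrative name.

```python
def blended_gross_margin(segments):
    """Revenue-weighted gross margin across (revenue, gross_margin) pairs."""
    total_revenue = sum(revenue for revenue, _ in segments)
    if total_revenue <= 0:
        raise ValueError("total revenue must be positive")
    gross_profit = sum(revenue * margin for revenue, margin in segments)
    return gross_profit / total_revenue


# ASSUMPTION: revenues (in $ billions) are hypothetical, for illustration only.
# Margins: midpoint of the 70% to 75% range, and the ~22% cited for Dell.
chip_business = (200.0, 0.725)
pc_business = (100.0, 0.22)

combined = blended_gross_margin([chip_business, pc_business])
print(f"Blended gross margin: {combined:.1%}")
```

Under these assumed inputs the combined margin lands in the mid-50s, well below the chip business on its own. The exact figure depends entirely on the revenue mix, which is why the sketch takes it as a parameter rather than asserting one.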

So why would Jensen Huang – one of the savviest executives in the history of the technology industry – even consider this?

The answer, if there is one, isn’t that Nvidia suddenly wants to sell PCs. It’s that Nvidia is trying to secure the next battlefield for AI computing before a threat becomes obvious to everyone else.

The Strategic Logic Behind a ‘Bad’ Acquisition

Right now, Nvidia dominates the training of AI models – the initial, enormously expensive process of teaching an AI system on vast quantities of data. Nvidia’s GPUs are unmatched for this task, and every major AI lab on Earth uses them.

But what happens when a model is already trained? Every time you ask ChatGPT a question, every time Google summarizes a search result, every time an AI agent writes a piece of code – that’s called inference. And inference is where the economics of AI compute get complicated for Nvidia.

See, the big cloud companies – Alphabet (GOOGL), Amazon (AMZN), Microsoft (MSFT), Meta – have spent the last several years building their own custom chips specifically designed for inference. Google has its TPUs. Amazon has Trainium and Inferentia. Microsoft has the Maia chip. Meta has its MTIA silicon. These chips aren’t as versatile as Nvidia’s GPUs. But for running an already-trained model, they can be just as fast at a fraction of the cost.

In other words, for the fastest-growing segment of AI compute – inference – the hyperscalers are methodically reducing their reliance on Nvidia.

The Next Battlefield: Edge AI Computing

If the cloud layer is becoming contested, where does Nvidia look next? The answer Jensen Huang has been signaling publicly for a while is the edge – meaning the AI compute that happens locally, on your own device, rather than in a remote data center.

AI models are getting smaller and more efficient every year. What required a warehouse full of servers two years ago can run on a high-end laptop chip today. As that trend continues, more and more AI inference will happen on the device in your hands rather than in some distant data center. Your PC, phone, and laptop become the AI engine.

If that’s the future, then whoever controls the chip inside that device controls the next era of AI computing. And right now, Nvidia doesn’t control that chip.

Apple (AAPL) controls it in its own devices, with the M-series processors. Qualcomm (QCOM) is pushing hard into Windows PCs with Snapdragon X. AMD (AMD) is making noise. Even Intel (INTC), fighting for relevance, is trying.

Buying Dell or HP – two companies that together ship tens of millions of PCs every year – would give Nvidia a direct distribution channel for whatever edge AI chip it wants to deploy. It would be Nvidia planting a flag before the landscape gets crowded.

What the Nvidia Rumor Really Signals

The most telling thing about this rumor isn’t the target but the logic behind it.

Companies that are genuinely confident in their competitive position don’t typically make massive, operationally disruptive acquisitions into adjacent low-margin businesses. Microsoft didn’t buy a PC company when it was winning with Windows. Intel didn’t buy a phone carrier when it was dominant in desktop processors.

If Nvidia is even considering a move like this, the most logical explanation is that it is less confident in its own cloud moat than the public narrative suggests. The custom silicon threat – i.e. Google’s TPUs, Amazon’s Trainium, and the whole hyperscaler ‘de-Nvidia-fication’ attempt – may be progressing faster and cutting deeper than Huang lets on during earnings calls.

Who Wins If Nvidia Loses Ground

If Nvidia is privately realizing that custom silicon is eating its inference lunch at the cloud layer, the natural question is, who builds all that custom silicon?

The answer: Broadcom (AVGO) and Marvell (MRVL).

Broadcom is the dominant designer of custom AI accelerator chips (ASICs) for the hyperscalers. Google’s TPUs, for example, run on Broadcom-designed silicon. As every major cloud company accelerates its push away from Nvidia and toward proprietary chips, Broadcom’s order book gets fatter. The company has already projected $100 billion-plus in AI revenue by 2027. If the de-Nvidia-fication trend steepens, that number could prove conservative.

Marvell is playing the same game at a slightly earlier stage, building custom data infrastructure silicon and targeting a $15 billion revenue run rate by fiscal 2028. Same tailwind, slightly more upside optionality.

And then there’s Arm Holdings (ARM), which is perhaps the cleanest winner of all. Arm doesn’t make chips – it designs the fundamental architecture that virtually every edge chip is built on. The CPUs paired with those inference chips? Increasingly Arm – AWS Graviton, Google Axion, Microsoft Cobalt. Arm collects a royalty on all of it. No matter who wins the custom silicon wars, it still gets paid.

The Bottom Line: The Clock Is Ticking on Nvidia’s Dominance

Maybe the Nvidia rumor is nothing: a speculative blog post that sparked a one-day trade.

But the logic embedded within that rumor – that Nvidia sees the inference layer escaping its grasp and is looking for a new front to defend – is worth taking seriously regardless of whether a deal ever happens.

The market has been pricing Nvidia as if its data center dominance is permanent and unchallengeable. The custom silicon buildout at the hyperscaler layer, the migration of AI toward smaller and more efficient edge models, and now this strange PC acquisition rumor all point in the same direction: the clock is ticking on that dominance.

If this is right, the takeaway isn’t just about Nvidia.

It’s about who actually gets access to the most important parts of this shift… and who doesn’t.

The market has a habit of opening the door only after the structure is already built – after the winners are clearer, and the easy gains are gone.

And right now, some of the most important pieces of the AI stack still sit outside that door.

OpenAI is one of them.

Not widely accessible. Not fully priced. Not yet part of the public market narrative.

Which raises a different kind of question: What does it look like to position before access becomes easy?

We’ve spent some time digging into that – how it might happen, and what the window looks like before it does.

Here’s what we’ve found.